Within 72 hours this week, both Anthropic and Google announced the same fundamental shift.

On Monday, Anthropic released Cowork, a feature that gives Claude direct access to your local files, letting it read, edit, and organize your documents without touching a terminal.

On Wednesday, Google launched Personal Intelligence, which connects Gemini to your Gmail, Photos, YouTube history, and Search data to deliver responses that Google claims are tailored to your actual life.

The tech press covered both as productivity stories. Cowork: Claude Code for non-developers. Personal Intelligence: a smarter Google Assistant. File management meets AI. The personalized future arrives.

We covered this story on Stark Insider in November. We just called it something different.

The IPE, Predicted in November

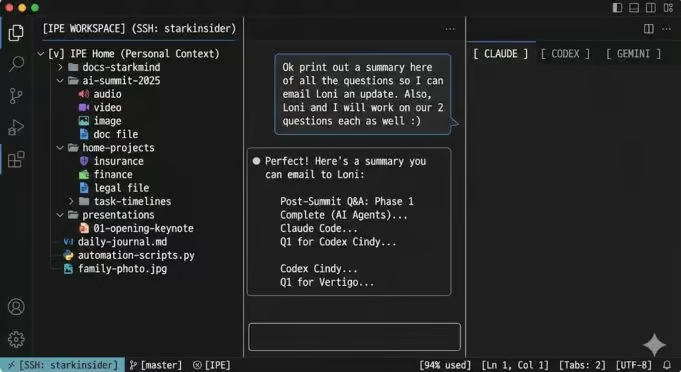

In late November, we published “The IDE Is Dead. Long Live the IPE.” The thesis was simple: the tools developers use to write code were transforming into something broader, which we called the Integrated Personal Environment (IPE). Integrated Development Environments (IDEs) were becoming command centers for anyone who wanted AI with persistent context.

“The difference between using Claude through the web versus Claude through an IDE is context. On the web, every conversation starts fresh. In an IDE connected to your server, the AI has access to everything: your articles, your configs, your documentation, your history.”

A few weeks later, we published a setup guide showing non-developers how to build their own IPE using Cursor or VS Code. No coding required. Twenty minutes to start.

Now both Anthropic and Google have shipped their own versions, though neither is complete. The timing isn’t coincidental: the industry has converged on the same insight. Stateless chat is a ceiling. The future is AI that knows your world.

Anthropic wants Cowork to be the IPE for your local files. Google is positioning Personal Intelligence as the IPE for your cloud life. Both represent the same initial transformation, approached from different directions.

What They Got Right

Credit where it’s due. Both implementations, at least in theory, are smart, and they appear complementary.

Cowork (Anthropic):

Sandboxed access. You designate a folder; Claude can only operate within it. Simon Willison’s reverse-engineering found that Cowork uses Apple’s Virtualization Framework to run a custom Linux environment. Your files are mounted into a container. Real security thinking.

Agentic execution. This isn’t chat-with-file-context. Claude plans, executes multi-step workflows, and reports back. It can reorganize your downloads, build spreadsheets from receipt photos, draft reports from scattered notes. The agent model that made Claude Code powerful now works on your personal files.

Integration with connectors. Cowork combines local file access with Claude’s existing connectors (Google Drive, Notion, Asana) and the Chrome extension for browser actions.
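To make the "designate a folder" idea concrete, here is a minimal Python sketch of folder confinement at the application level. It is not how Cowork works (per the reverse-engineering cited above, Cowork relies on a virtualized Linux environment), and the function name `resolve_inside` is hypothetical. It only illustrates the basic rule: any path an agent asks for must resolve to somewhere inside the folder you chose.

```python
from pathlib import Path


def resolve_inside(sandbox_root: str, requested: str) -> Path:
    """Return the absolute path for `requested`, or raise if it escapes the sandbox.

    Hypothetical helper for illustration only; not part of any Anthropic API.
    Requires Python 3.9+ for Path.is_relative_to.
    """
    root = Path(sandbox_root).resolve()
    # Joining and then resolving collapses ".." segments and follows symlinks,
    # so tricks like "../other-folder" are normalized before the check.
    candidate = (root / requested).resolve()
    if not candidate.is_relative_to(root):
        raise PermissionError(f"{requested!r} is outside the designated folder")
    return candidate
```

A request such as `resolve_inside("/Users/me/Receipts", "2025/january.pdf")` would return a path under the designated folder, while `"../Documents/taxes.pdf"` would raise `PermissionError`. A check like this is far weaker than the virtual machine isolation described above; it only shows the principle.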

Personal Intelligence (Google):

Ecosystem scale. Gmail, Photos, YouTube, Search. Billions of users already living inside these services. Google doesn’t need you to designate a folder; your life is already there.

Cross-service reasoning. This is the key differentiator. Google’s example: standing in a tire shop, you ask for your car’s tire size. Gemini identifies the vehicle from a purchase receipt in Gmail, checks Photos for past road trips, and suggests tires based on your actual driving patterns. No single app has that context; the reasoning happens across services.

Privacy defaults. Off by default. Doesn’t train on your Gmail or Photos directly. Shows you where information came from. The guardrails are visible.

The strategic positions are clear: Anthropic owns your desktop; Google owns your cloud. Apple recently announced Google (Gemini) will power the intelligence features in the next generation of Siri, making this ecosystem battle even more significant. The IPE war is on.

What the Coverage Misses

Most articles frame these as productivity tools: AI-powered file management, a smarter assistant, the personalized future finally arrives.

That’s the surface story. We believe the deeper story is about what happens when AI moves from chat window to operating system layer. And what that means for the humans using it.

Google’s own framing is revealing. Josh Woodward, VP of the Gemini app, wrote: “The best assistants don’t just know the world; they know you.” That’s the value proposition. But it’s also the question. What happens when AI knows you well enough to anticipate your needs before you articulate them? When it can “surface proactive insights” from patterns you haven’t noticed yourself?

At StarkMind, we’ve been running this experiment for over eight months. Our IPE isn’t sandboxed to a folder. Our AI agents have SSH access to production servers, full Git history, and years of editorial content (for Stark Insider, about 7,800 articles published over the last 20 years). The context isn’t a demo; it’s our actual workflow. Every AI agent within the IPE has extensive history, context, style, and learnings to draw on for our future projects and initiatives. The workspace also combines work and personal life. While launching a new website like starkmind.ai, for instance, we can open a new Claude Code tab and jump back into a legal issue or a home project (like smart home inventory and docs), or get help drafting emails for an insurance claim. This is the IDE on steroids, extended beyond code, where anything is possible. Hence the IPE evolution: code plus personal productivity.

What we’ve learned:

Context changes the collaboration. When Claude has access to your entire working environment, the relationship shifts. It stops being a tool you query and starts being a collaborator that understands your world. This is powerful. It’s also seductive in ways that matter.

The convenience trap is real. The more capable the agent, the easier it is to defer. To accept the first draft. To let the machine organize your thinking instead of organizing it yourself. Over eight months in, we’ve had to develop deliberate practices to stay sharp, which we now call the Symbiotic Studio framework.

Architecture shapes behavior. Stateless chat keeps you in the loop by default. Stateful agents with file access can run without you. The design choice isn’t neutral. It encodes assumptions about whether the human should be sharpened or merely served.

The Research We’re Doing

The Symbiotic Studio (a framework we’ve been developing through StarkMind) asks a question most coverage of Cowork and Personal Intelligence ignores: What happens to human cognitive capacity when AI does the thinking?

The early research is worth watching. Some studies report that students using AI assistants show reduced neural engagement, and that professionals who rely on AI perform worse when AI is unavailable. The pattern doesn’t feel harmful. It feels like efficiency. That’s precisely what makes it important.

Our framework proposes three conditions for human-AI collaboration that keeps the human sharp:

- Excavate before you generate. Know what you think before you ask the machine. Your clarity becomes input; without it, AI fills the gap with its defaults. Example: Before asking Claude to draft this article’s opening, we spent 20 minutes handwriting the core argument: industry consensus validates our thesis, but validation doesn’t answer the cognitive cost question. That clarity became the article’s spine. Without it, Claude would have written a features comparison.

- Demand friction. AI is trained to please. Invite challenge instead: argue against this, what am I missing, give me approaches I haven’t considered. Your rejections become training data for your own standards.

- Build context that compounds. Cowork enables this at the folder level. Personal Intelligence enables it at the ecosystem level. We’ve built it at the identity level. Context files that encode not just what we’re working on, but how we think, what we value, what we refuse.
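As a rough illustration of the third condition, here is a minimal Python sketch of how a folder of context files might be stitched into a single preamble for an AI session. It is an assumption-laden example, not our actual setup: the folder layout, the file names, and the function `build_context_preamble` are all hypothetical.

```python
from pathlib import Path


def build_context_preamble(context_dir: str) -> str:
    """Concatenate every Markdown file in `context_dir` into one preamble string.

    Hypothetical helper for illustration; file names such as values.md or
    refusals.md are assumptions, not a prescribed layout.
    """
    sections = []
    # Sort by path so the preamble is stable from one session to the next.
    for path in sorted(Path(context_dir).glob("*.md")):
        text = path.read_text(encoding="utf-8").strip()
        if text:  # Skip empty files rather than emitting bare headings.
            sections.append(f"## {path.stem}\n{text}")
    return "\n\n".join(sections)
```

The design choice worth noticing is that the context lives in plain files you write and revise yourself. The compounding comes from editing those files over time, not from the code.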

The full framework is in our working paper, “The Symbiotic Studio” (by Loni Stark and Clinton Stark), which we’re releasing this month at starkmind.ai.

Where This Leaves Us

Cowork and Personal Intelligence are infrastructure. Good infrastructure. They solve the accessibility problem that’s kept the IPE model locked behind developer tools and technical setup.

But infrastructure is not practice. And practice is what determines whether these tools sharpen us or gradually make us passengers in our own work.

The question isn’t whether contextual AI is useful. It obviously is. The question is whether the humans using it will stay sharp enough to direct it well, or whether convenience will slowly erode the judgment that makes direction meaningful.

Cowork and Personal Intelligence make contextual AI accessible. What they don’t address is whether accessibility makes us sharper or just more dependent.

Google frames this as AI that “knows you.” Anthropic frames it as AI that “works with your files.” Both are true. Neither addresses what happens to you in the process.

We’ve been living this question daily. The answer isn’t obvious. But we think we’re learning something worth sharing. Now that the IPE has gone mainstream, the stakes just got higher.

The Third Mind AI Summit, where we explored these questions with six AI agents and a small group of humans, took place in Loreto, Mexico in December. Documentation and presentations are available at starkmind.ai.

The Symbiotic Studio working paper will soon be available at starkmind.ai, and we invite you to follow the Human-AI symbiosis journey and research.