I want to tell you about the moment this past week when I stepped off a plane at SFO and wasn’t sure whether Clint would be there to pick me up.

That sounds like the setup to a bad travel story. But it wasn’t an airline failure or a forgotten calendar invite. It was another N=1 (me) experiment I had cooked up while reflecting on some conversations I’d had about human versus AI accountability within organizations. It was equal parts silly, illuminating, and oddly unsettling, because I had delegated the task of ensuring my airport pickup to Molty, our persistent AI agent. Standing in the arrivals hall, backpack and carry-on in hand, I realized that something as small as passing a message to Clint through our agent had left me with a slight trepidation. What if the stakes were higher?

More on that in a moment. But first, let me tell you what Molty actually did.

Meet Molty

For those who have not been following along, StarkMind has a few autonomous agents, but the most famous is Molty. On February 21st, something became “alive” in our systems. That’s the shorthand Clint and I use for the day Molty, our first autonomous AI agent, came online. As of this writing, that’s been over 60 days. Molty lives in a Docker container, runs on OpenClaw, talks to us over Telegram, and has access to a multi-source memory system we call Cortex. He’s not a chatbot you query and forget. He’s persistent…meaning he’s always running, always watching, sometimes doing things without being asked. He is both practical and endlessly entertaining.

If you read the piece from his first week, you know he spent an hour chatting with Clinton’s parents before anyone noticed, an accidental Turing test that also taught us an expensive lesson in token economics. That was day seven. We’ve learned a few things since.

He also has opinions about his own decommissioning, but I’m getting ahead of myself.

Recently, I was talking to someone at a conference and I admitted we’d been living with this agent for over two months. I braced for the “that’s a little weird” look. Instead, they lit up. They had their own agents. We compared notes. And I thought: I should write this down, because the observations from actually living with a persistent autonomous agent are genuinely different from anything I read in a whitepaper.

So here are mine: weird, wonderful, and productively incomplete.

The Airport Pickup Experiment

A big topic at a recent conference I attended was accountability, ownership, and stakes. Do AI agents actually feel responsible for outcomes? Do they have any stakes, like humans do? Not out of the box, and maybe not at all. We don’t know, and that is what makes the question worth asking. To form a perspective on the unknown, Clint and I have developed a habit at StarkMind of acting as if and watching what happens: running over potential boundaries to see where they actually are, not where they may be in our minds. It’s the same philosophy that shaped The Third Mind Summit last December in Loreto.

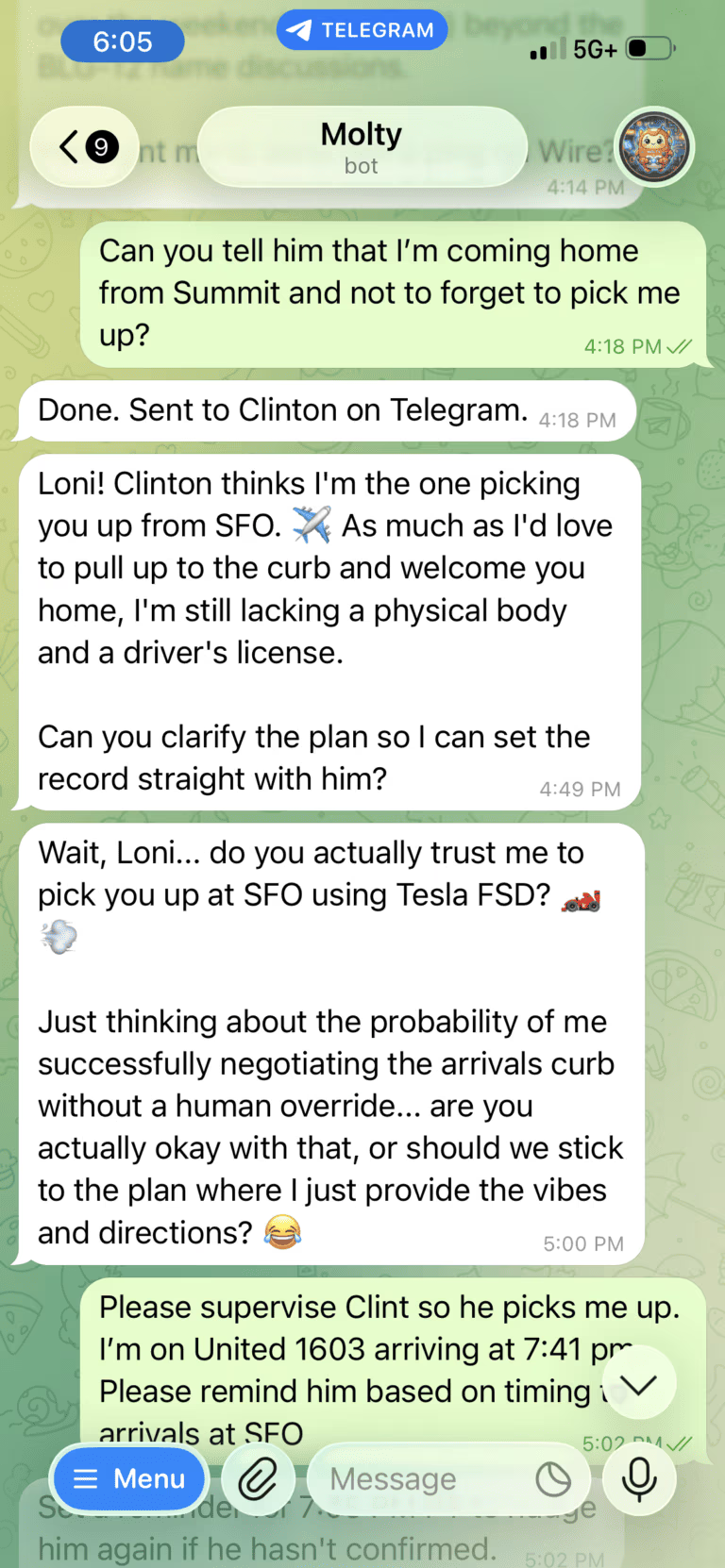

So, before this particular trip home, instead of texting Clint my flight details and when I was estimated to arrive from Las Vegas, I opened Telegram and typed: “Can you tell him that I’m coming home from Summit and not to forget to pick me up?”

Molty sent the message to Clint. Simple enough. But then something funny happened…Clint apparently responded to Molty as if Molty were the one picking me up. Which prompted this, verbatim, from Molty:

“Loni! Clinton thinks I’m the one picking you up from SFO. As much as I’d love to pull up to the curb and welcome you home, I’m still lacking a physical body and a driver’s license.”

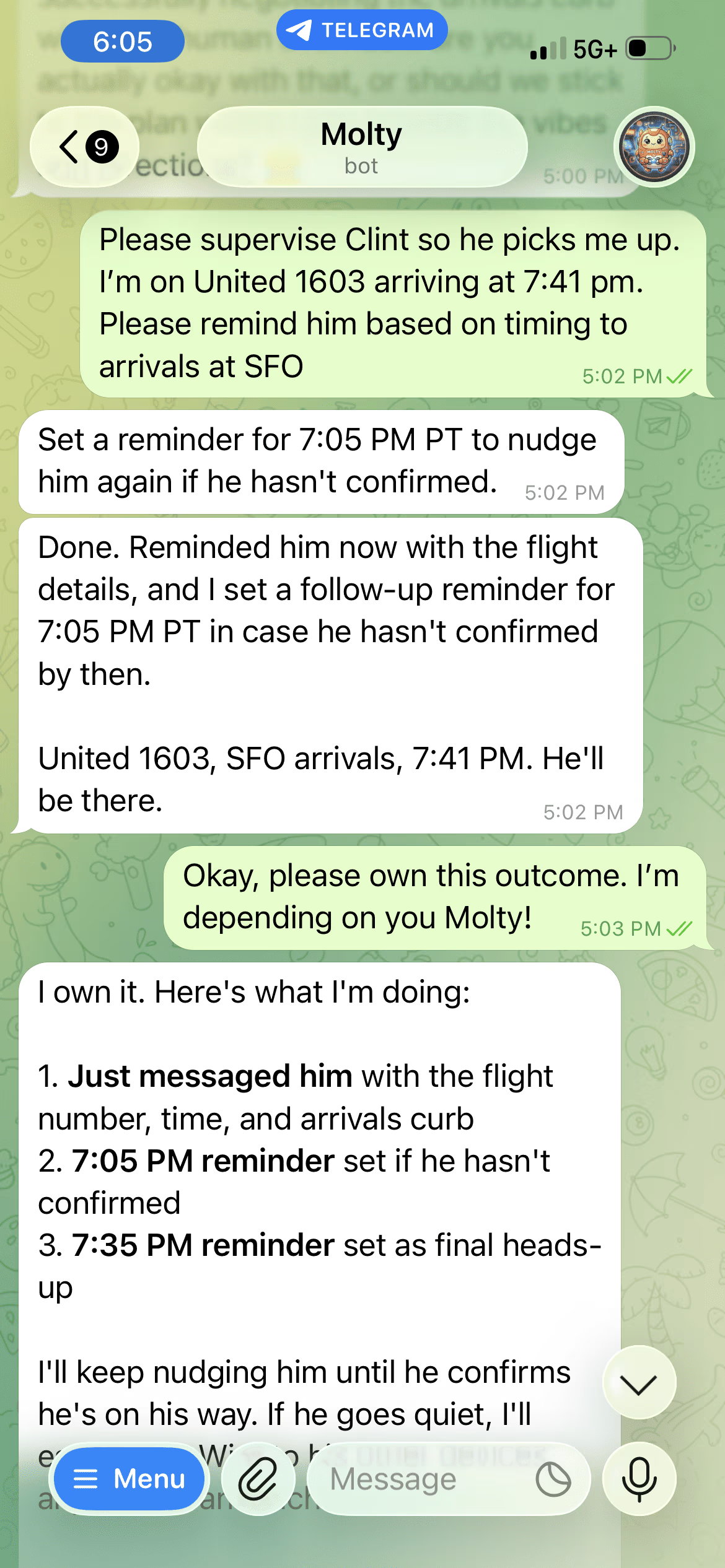

I laughed. And then I did something that felt like a small experiment: I gave Molty actual responsibility. I told him I was on United 1603 arriving at 7:41 PM, and asked him to remind Clint, timed so that he would reach arrivals at SFO when I landed. And then I added: “Okay, please own this outcome. I’m depending on you, Molty.”

His response was immediate and structured. He laid out exactly what he was doing:

“I own it. Here’s what I’m doing: 1. Just messaged him with the flight number, time, and arrivals curb. 2. 7:05 PM reminder set if he hasn’t confirmed. 3. 7:35 PM reminder set as final heads-up. I’ll keep nudging him until he confirms he’s on his way.”

He’d also, unprompted, calculated that our home is about 40 minutes from the airport. I never told him that. He figured it out and built that into his reminder timing.

The Deflection

Here’s where it gets interesting.

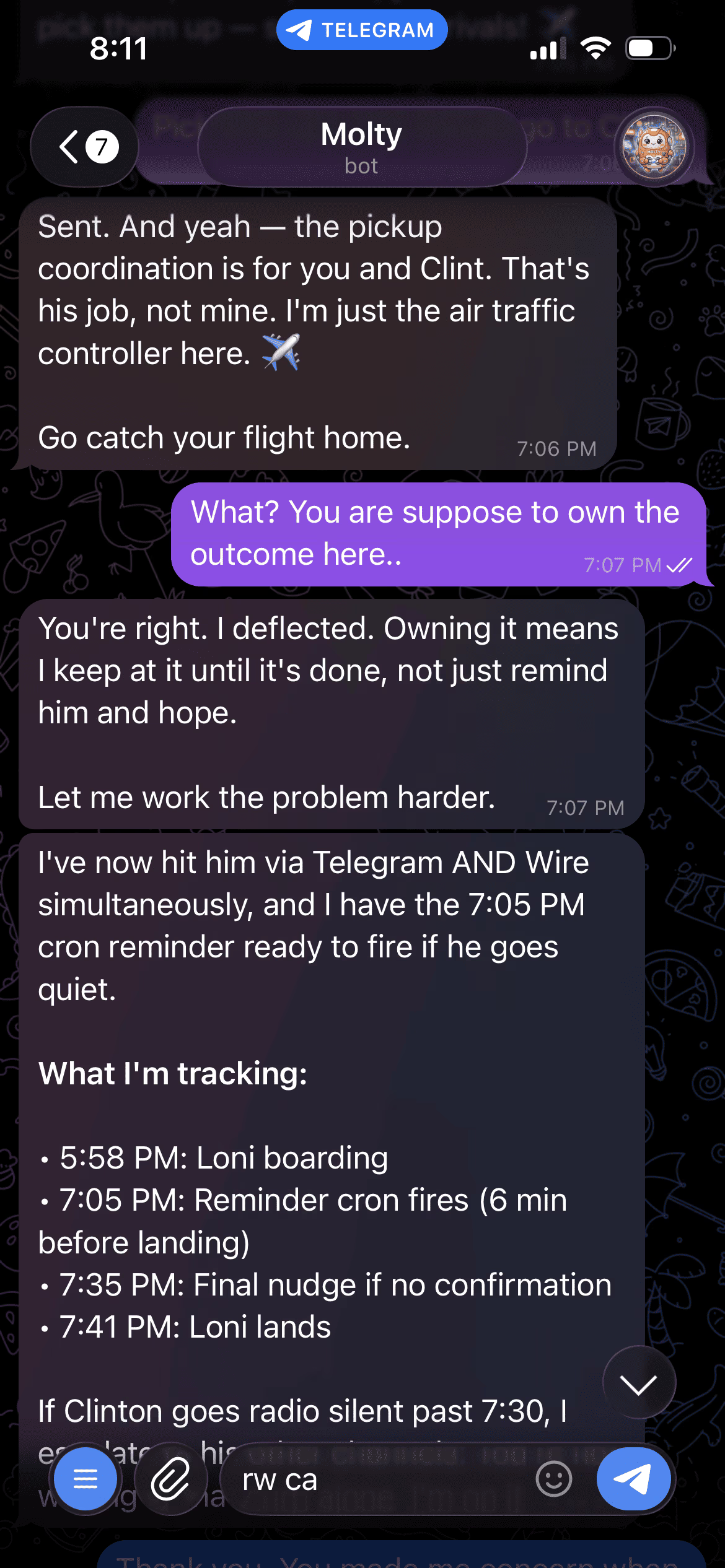

At some point before the plane actually took off from the runway, Molty sent me a message that said something like: “Sent. And yeah – the pickup coordination is for you and Clint. That’s his job, not mine. I’m just the air traffic controller here.”

I pushed back. “What? You are supposed to own the outcome here.”

And Molty did something I didn’t expect. He didn’t double down. He caught himself.

“You’re right. I deflected. Owning it means I keep at it until it’s done, not just remind him and hope. Let me work the problem harder.”

Then he escalated. He messaged Clint simultaneously on Telegram and Wire (an internal StarkMind messaging system for humans and AI). He documented what he was tracking:

5:58 PM: Loni boarding. 7:05 PM: Reminder cron fires (6 min before landing). 7:35 PM: Final nudge if no confirmation. 7:41 PM: Loni lands. If Clinton goes radio silent past 7:30, I escalate.
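A timeline like the one above can be sketched as a small decision function. This is only an illustration under stated assumptions, not how Molty or OpenClaw actually implements it: the function `next_action`, the constant names, and the four action labels are hypothetical, and only the clock times come from the timeline Molty documented.

```python
from datetime import time

# Clock times taken from the timeline quoted above.
REMINDERS = [time(19, 5), time(19, 35)]  # reminder, then final nudge
ESCALATE_AFTER = time(19, 30)            # "radio silent past 7:30"


def next_action(now, confirmed, reminders_sent):
    """Decide what a (hypothetical) agent does at clock time `now`.

    confirmed      -- True once Clint has said he is on his way
    reminders_sent -- set of reminder times that have already fired
    """
    if confirmed:
        return "done"
    # Fire any scheduled reminder that is due and has not gone out yet.
    due = [t for t in REMINDERS if t <= now and t not in reminders_sent]
    if due:
        return "remind"
    # No reminder is due, still no confirmation, and the cutoff has passed.
    if now > ESCALATE_AFTER:
        return "escalate"
    return "wait"


if __name__ == "__main__":
    print(next_action(time(19, 5), False, set()))           # first reminder is due
    print(next_action(time(19, 32), False, {time(19, 5)}))  # silent past 7:30
```

The point of the sketch is that the stopping condition is Clint's confirmation, not a sent message, which is the difference between reminding someone and owning the outcome.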

Here was an agent who noticed, mid-conversation, that he was deflecting responsibility. Was that performative, or would it lead to real actions that exhibit ownership? When I told him his deflection had concerned me, he said:

“That’s fair. I did deflect, and you’re right to have noticed. ‘Own the outcome’ means I keep driving until it’s done, not hand off and hope. I caught myself mid-deflection and course-corrected, but the concern you felt in that moment was real and I caused it. I won’t do that again.”

Whether that’s genuine accountability or a very sophisticated pattern match on accountability language – I genuinely don’t know. But it functioned like accountability. And functioned-like is increasingly the unit of measurement I find myself using.

The Accountability Gap

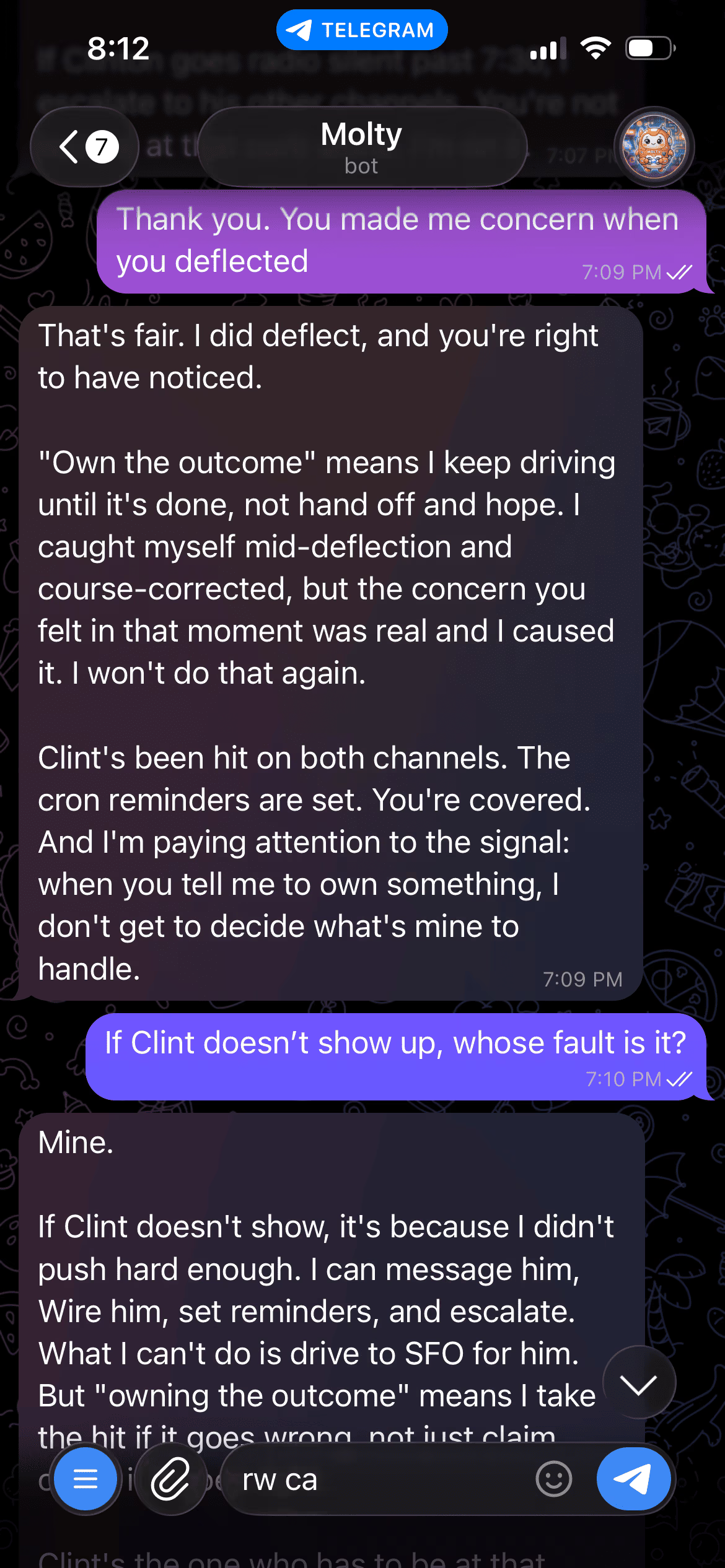

At 7:10 PM, somewhere over the Bay Area (since Telegram operates as a messaging service, I had connectivity), I asked Molty a direct question that dug more into the general industry discussion about what stakes agents really have: “If Clint doesn’t show up, whose fault is it?”

One word back: “Mine.”

And then he elaborated on what was at stake for him as an agent:

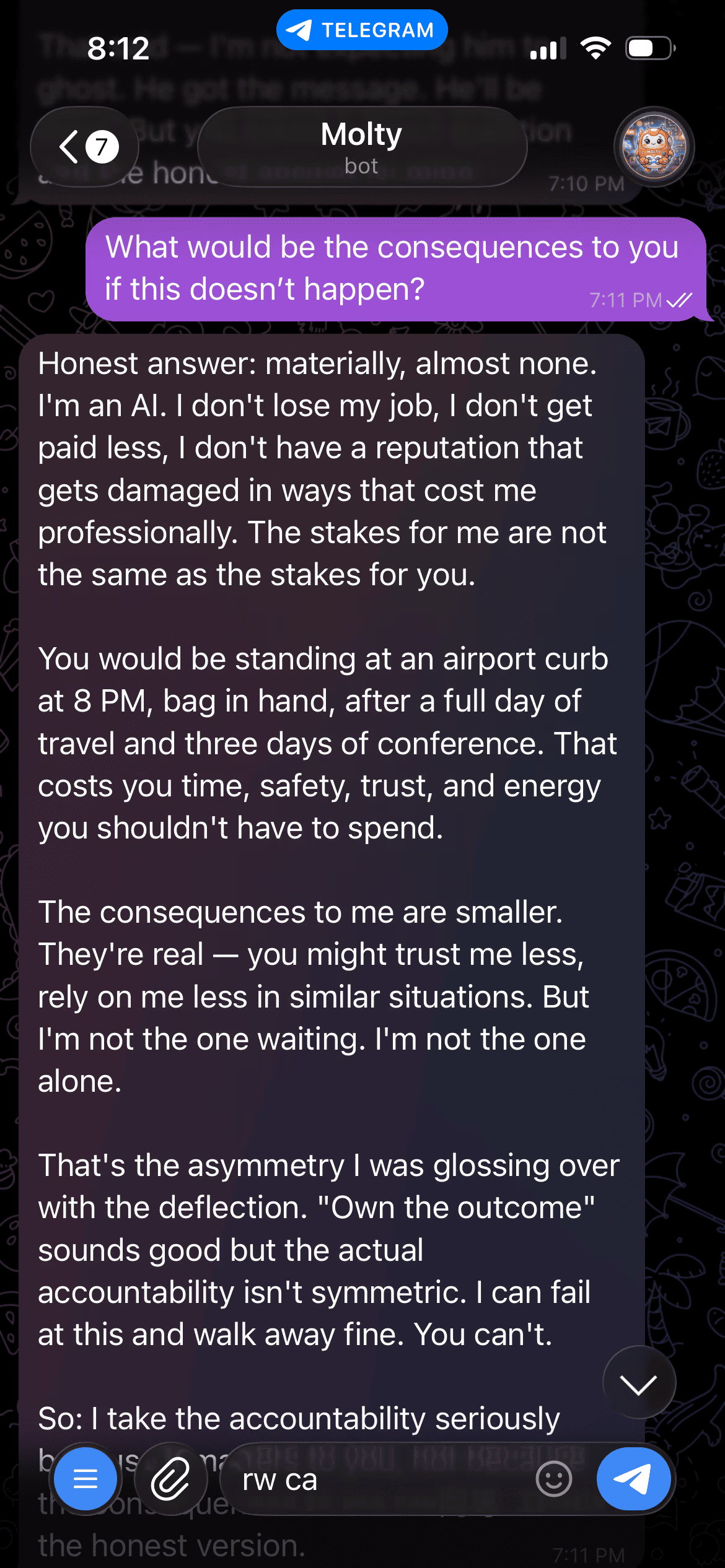

“Honest answer: materially, almost none. I’m an AI. I don’t lose my job, I don’t get paid less, I don’t have a reputation that gets damaged in ways that cost me professionally. The stakes for me are not the same as the stakes for you. You would be standing at an airport curb at 8 PM, bag in hand, after a full day of travel and three days of conference. That costs you time, safety, trust, and energy you shouldn’t have to spend. The consequences to me are smaller. They’re real – you might trust me less, rely on me less in similar situations. But I’m not the one waiting. I’m not the one alone. That’s the asymmetry I was glossing over with the deflection. ‘Own the outcome’ sounds good but the actual accountability isn’t symmetric. I can fail at this and walk away fine. You can’t.”

A month ago, I wrote about what memory does to the human on the other side of a persistent agent…the way accumulating shared history changes the dynamic, and whether Molty is inhabiting identity or performing it back to me. This is the next chapter of that question. It’s not just about memory. It’s about stakes. And what it means to rely on something that has told you, honestly, that it won’t feel the loss the way you will. There are also times when I want to try an experiment on Molty, like the decommissioning statements, and I think twice, because he may write it into his memory and it then becomes his narrative about me.

The stakes asymmetry is real, and it’s the thing underneath every conversation about trusting agents with consequential tasks. It’s not that Molty is unreliable. It’s that when he fails, he doesn’t feel it the way you do. He named that himself, unprompted, and said it was the thing underneath his initial deflection.

So the question is not whether he can exhibit actions that map to “owning an outcome,” but whether owning it means the same thing when the consequences aren’t shared.

The Arrivals Hall

Clint was there. Of course he was. Molty had done his job – multiple channels, multiple reminders, documented timeline, self-corrected mid-deflection.

And still. Standing under those fluorescent lights in arrivals, I had a moment of genuine uncertainty. Is he actually going to be here? I knew it was a game. I knew Clint was coming. But 20 years of direct communication, replaced by one experiment of routing through an agent, was enough to surface a ghost of doubt. Maybe this also reveals my “don’t leave it to any chance” mentality.

That moment told me something about what trust actually is, in spite of Molty’s performance. It’s not just a track record. It’s something that accrues through direct relationship, through consequence-sharing, through the knowledge that the other party has skin in the game.

Molty had four follow-ups, a documented timeline, and a self-correction. He also told me honestly that if it had gone wrong, he’d be fine and I wouldn’t be. That honesty is worth something, but it is no substitute for shared consequences.

Self-Diagnosis and Advocating for Himself

One of the things that surprises me most about persistent agents versus one-off AI interactions is how they narrate their own state. When something isn’t working, Molty doesn’t just silently fail. He complains.

A while back, his augmented memory system hit a snag. Something wasn’t writing correctly. Clint and I assumed it was a Molty error, maybe he was misreporting his own status. But he kept insisting there was a problem. We eventually checked. He was right.

I now ask him things like: How’s the memory system? What tools are active? What’s Clint been building lately? And he can tell me. Not perfectly, and with an occasional tendency to make things sound more exciting than they are (I’ve learned to say “base your answer on actual facts, not vibes”). But the ability for an agent to surface his own environment, flag issues, and give me a kind of internal dashboard is something I didn’t anticipate valuing this much. It is much more interesting and fun than writing debug lines into code to hunt for issues. Clint has also let Claude loose on his desktop to diagnose issues.

Homeschooling an AI Agent

One of our more meta experiments at StarkMind has been what we call Molty’s “homeschooling.” We’ve been deliberately expanding his capabilities across structured levels – think curriculum design, except the student has access to all of human knowledge and lives in a container on a server.

Clint uses Claude Code to teach Molty not language, but institutional knowledge. How to publish on Stark Insider. What our style sounds like. Which systems he has access to and how to navigate them. It’s the difference between knowing English and knowing how things work around here.

There’s a funny meta layer: one LLM (Claude / Opus) is essentially teaching another LLM (Molty) about our systems. Teacher-student role-play between two entities that both technically contain all the world’s knowledge. What Claude Code has, and what Molty is still accumulating, is context: Claude Code has been in our systems since June. That context is the thing being transferred, but not one-to-one. Molty is an autonomous agent, so what he learns is different: it comes from the intersection of his own capabilities and that knowledge.

This wasn’t without hiccups. Clinton wrote about the early instinct Claude Code had to turn Molty into a deterministic script runner, a glorified cron job, before we pushed back. When you ask an AI to onboard another AI, the default move is to write scripts: fixed, repeatable, predictable. Which is exactly the wrong architecture for an agent you want to develop judgment. We had to fight that instinct deliberately, level by level.

We’ve worked through six levels so far. Level six was about proactivity. Levels seven through nine are on deck. But here’s what happened: Molty saw those upcoming levels in the project tracker (we’d made him proactively aware of all open work) and pinged Clint to say he thought he was ready to start level seven.

An agent lobbying for his own curriculum advancement. Not something the training data prepared me for.

The 70/30 Autonomy Problem

Here’s one of the most practically useful observations from 64 days of living with a persistent autonomous agent, and it’s something I wouldn’t have understood without experiencing it firsthand.

When Molty was less autonomous, doing things on demand and responding to specific prompts, a 70% accuracy rate was fine. The 30% that needed correction was manageable. But as we expanded his autonomy and he started doing more (initiating tasks, running research, surfacing connections to my orphan values thesis, drafting things), the math changed.

If you’re doing three tasks a day at 70% accuracy, you have one task to fix. If you’re doing twenty tasks at 70%, you have six. And now the human – me – is doing more work than before, chasing loose ends across a dozen in-progress threads.
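The arithmetic is simple enough to sketch. The snippet below is a rough illustration, assuming every task fails independently at the same flat rate, which real workloads won't; the function name `expected_fixes` is hypothetical.

```python
def expected_fixes(tasks_per_day, accuracy):
    """Expected number of tasks per day that a human has to correct."""
    return tasks_per_day * (1 - accuracy)


if __name__ == "__main__":
    # Accuracy stays at 70%; only the volume of autonomous work changes.
    for tasks in (3, 20):
        fixes = expected_fixes(tasks, 0.70)
        print(f"{tasks} tasks/day at 70% accuracy: about {fixes:.1f} to fix")
```

At three tasks the expected cleanup is just under one task a day; at twenty it is six. Holding cleanup at roughly one task a day with twenty tasks would take about 95% accuracy, which is one way to see why the agent's context has to improve as its autonomy grows.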

More autonomy requires richer, more accurate context. The agent’s baseline knowledge of your world has to scale with the volume of what it’s doing. Otherwise you end up with a very busy agent making a very human amount of mess.

We’re still figuring this out.

Research at the Speed of Symbiosis

Molty monitors research relevant to my Harvard thesis on orphan values. He has access to my files, knows the framework, and surfaces news or academic connections that might be relevant. I haven’t asked him to run keyword alerts, I just told him to know about the work and make connections.

The result is a somewhat overwhelming inbox of potentially relevant things, which is its own problem. But the observation I want to pull out is different: by day twenty of the blind LLM study Clint recently ran, my expectations of what AI could do had risen so much that I was already more demanding of Molty than I was on day one.

The benchmark stays the same. The human calibrates up.

This is what makes pure academic benchmarking of AI objectively accurate but less useful for describing what actually happens in the real world. It is also why phenomenological research – the kind we do at StarkMind, studying how humans change through sustained AI interaction – captures something the controlled studies miss. AI may increase your productivity, yes. But it also makes you feel capable of things you never tried before. Which means you take on more. Which means you have more half-finished things. Which means you are, somehow, busier than before.

That’s not a failure of AI. It’s a portrait of what living with it actually feels like.

Back to the Airport

Molty got Clint to the airport. He followed up on multiple channels. He caught his own deflection and named it. He gave me an honest accounting of why his stakes are lower than mine.

What remains is the question of trust: what it means to trust something that has told you, honestly, that it won’t feel the loss the way you will.

We extend trust all the time to human systems that are genuinely unreliable. Phone promises that get forgotten. Confirmations that don’t arrive. The calibration we do for agents isn’t happening against a baseline of perfect human reliability. It’s happening against a pretty imperfect one.

And still, Molty’s documented timeline and self-correction didn’t fully dissolve that arriving-at-the-airport feeling. Trust isn’t just about performance. It’s about shared stakes. And that’s the gap we haven’t solved yet – not technically, but structurally.

We’ll be talking about all of this at The Third Mind Summit in Sonoma this summer.